Hi,

I’m trying Sphinx on a powerful computer with the following resources:

- CPU: i9-9940X, 3.30 GHz, 14 cores

- GPU: RTX 2080, 11 GB

- RAM: 32 GB DDR

I’m running Ubuntu 18.04 with ubuntu-drivers-440 installed.

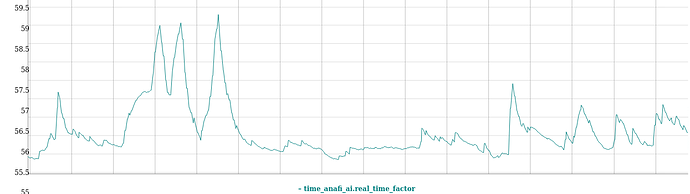

Unfortunately, on parrot-ue-empty, without any action (no takeoff, etc.), I get a very low real-time factor, around 58%.

Using nvidia-smi, I can see that UE and Gazebo are both using my GPU:

    $ nvidia-smi
    Thu May  5 17:32:28 2022
    +-----------------------------------------------------------------------------+
    | NVIDIA-SMI 440.33.01    Driver Version: 440.33.01    CUDA Version: 10.2     |
    |-------------------------------+----------------------+----------------------+
    | GPU  Name        Persistence-M| Bus-Id        Disp.A | Volatile Uncorr. ECC |
    | Fan  Temp  Perf  Pwr:Usage/Cap|         Memory-Usage | GPU-Util  Compute M. |
    |===============================+======================+======================|
    |   0  GeForce RTX 208...  On   | 00000000:B3:00.0  On |                  N/A |
    | 31%   46C    P0    60W / 250W |   2473MiB / 11018MiB |     37%      Default |
    +-------------------------------+----------------------+----------------------+

    +-----------------------------------------------------------------------------+
    | Processes:                                                       GPU Memory |
    |  GPU       PID   Type   Process name                             Usage      |
    |=============================================================================|
    |    0      1267      G   /usr/lib/xorg/Xorg                            18MiB |
    |    0      1314      G   /usr/bin/gnome-shell                          73MiB |
    |    0      5269      G   /usr/lib/xorg/Xorg                            18MiB |
    |    0      5402      G   /usr/bin/gnome-shell                         254MiB |
    |    0     13560    C+G   ...e4-empty/Empty/Binaries/Linux/UnrealApp  1837MiB |
    |    0     13991      G   gzserver                                       9MiB |
    |    0     18984      G   /usr/lib/xorg/Xorg                           142MiB |
    |    0     19077      G   /usr/bin/gnome-shell                         106MiB |
    +-----------------------------------------------------------------------------+

Any idea how I can find out why it is so slow?

Best,

Clément